Flyclient is a superlight client design dedicated to PoW blockchains. With a few modifications to the blockchain data structure and an elegant interactive protocol, it allows a light client to verify the validity of the latest block with only a few hundred kilobytes of data transmission, rather than downloading all the block headers. Afterward, the client can verify any transaction in any block with a short proof.

In this post, I will describe how to apply the Flyclient design to Nervos CKB. Henceforth I will assume the readers have already read the Flyclient paper (the proofs are not necessary) or at least watched the talk. The rest of this post will be highly technical and thus contains absolutely no meaningful message to the general audience.

Assumptions and Their Implications

Two Crucial Assumptions

- A light client only looks for the heaviest chain and confirms that a certain transaction is embedded in this chain. The heaviest chain is, by assumption, syntactically valid. In other words, there is no need for a light client to verify whether all the transactions are valid in this chain.

- The light client connects to at least one honest full node.

Based on the second assumption, we immediately have that, when all full nodes send the light client the same latest block or a consistent history, the client only needs to sample blocks from one of them.

Conflict Resolution

A point of discussion: what to do when two full nodes send us conflicting histories?

My suggestion is the following protocol:

- Sample from both chains a series of blocks with the same accumulated difficulty.

- Find the last common block in their replies, use it as the starting point for sampling, and repeat the first step.

Until we find an invalid block in one of the chains.

Set an upper bound on the times of this loop. Once the upper bound is reached, trust the heavier chain.

What to do and What Not to do

Personally, I don’t see why we need non-interactive sampling. It’s an interactive protocol anyway.

A light client should save its latest block so that if it goes offline and comes back online, there is no need to sample from the genesis block. It can sample from the latest block before it went offline, if that block is still among the heaviest chain. Of course, a small upper bound should be applied to the local storage.

There are two challenges when implementing Flyclient on CKB. First, how to ensure a smooth transition to kick-off the MMR. Second, how to design the MMR data structure. We will focus on the second challenge for the rest of this post. The first challenge will be dealt with when some other things are settled (e.g., where to put the MMR root, what kind of fork).

MMR Data Structure

Another point of discussion: do we want the number of blocks of all full MMR nodes to be powers of 2?

At this moment, I don’t think that is necessary. Please let me know if I am wrong. In Nervos CKB, every epoch contains roughly 300 to 1800 blocks. I think it is simpler if we have a certain level of MMR nodes that are dedicated to epoch roots. The benefit is, we can finish all operations regarding difficulty adjustment at the same level, which can simplify the algorithm and data structure design.

Now, what data field to put in the MMR node?

The main idea is, put all five parameters used in the difficulty adjustment in the MMR node so that we can reuse the code of calculating the new difficulty to verify whether the difficulty adjustment is correct!

\hat{H_{i-1}}: the adjusted hash rate of the i-1-th epoch

In the MMR, each leaf node is a block, and we term the level where each node represents an epoch epoch level.

Every MMR node contains:

- h: block/subtree hash—otherwise it is not a Merkle root.

- blockNum: (only applicable to non-leaf nodes) the number of leaf nodes in this subtree

- epochNum: (only applicable to nodes higher than epoch level) number of epochs, including incomplete ones

- w: the accumulated difficulty since the genesis block. Note that uncles do not contribute to the accumulated difficulty.

- deltaT: the time difference between the last block before this subtree (which is not part of this subtree), and the rightmost block in this subtree (which is part of this subtree). This value equals L_i for epoch-level nodes.

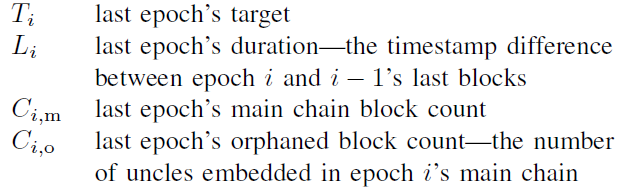

- firstEpochTarget, firstEpochDuration, firstEpochMainChainNum, firstEpochUncleNum:the target, duration, number of main chain blocks, and number of uncles of the first epoch in this subtree. These values equal T_i, L_i, C_{i,m}, C_{i,o}. Note that for all MMR nodes in an epoch, these four values only need to be transmitted once.

- nextTarget, nextMainChainNum: (only applicable when the last block of this subtree is the end of an epoch) the target and main chain block number of the next epoch (not in this subtree). These two values equal T_{i+1} and C_{i+1,m}.

- endAdjustedHashRate: (only applicable when the last block of this subtree is the end of an epoch) \hat{H_i} after the epoch ends.

Again, the benefit of this design is, we have all the inputs we need to re-execute the difficulty adjustment algorithm after every epoch.

Verifying the Validity of Difficulty Adjustment in MMR

This part corresponds to page 18 at the end of Sect. 6.1. The goal is to verify that there is at least one valid difficulty transformation (execution of a series of difficulty adjustments) that is consistent with the current sampling. Once this is verified, many attacks become impossible.

Step 0. verify all leaf nodes’ information is consistent with their epoch-level node, and all epoch-level nodes’ difficulty adjustment results are correct.

Step 1. List all epoch-level MMR nodes. These nodes split the blockchain into many segments. The blockchain is valid if and only if all segments are valid. Now we focus on one segment.

Assuming there are n epochs in this segment, the Flyclient paper checks three groups of conditions targeting NC:

- The difficulty change is between $\tau^n$and 1/\tau^n, where \tau is the dampening factor。

- The upper and lower bounds of the accumulated difficulty w.

- The upper and lower bounds of the total time.

Here is my solution:

The dampening factor.

In NC-Max, the adjusted hash rate estimation \hat{H_i} cannot change faster than \tau_1. So we compute \hat{H_i} for the first and last epoch of the segment, which allows us to check this condition.

The accumulated difficulty.

The total accumulated difficulty in epoch (i+1) is \hat{H_i}*L_{\rm ideal}. Among with, at least half is used on the main chain, at most all of them are used in the main chain. So the upper bound of the accumulated difficulty is to assume all of it is in the main chain; the lower bound is to assume only half of it is in the main chain.

Some further explanations on Equation 2 and 3 in the paper:

-

If the ending difficulty is higher than the starting difficulty, then

- the upper bound of the total difficulty is, first spend (n+k)/2 epochs raising the difficulty, then spend (n-k)/2 epochs lowering the difficulty;

- the lower bound of the total difficulty is, first spend (n-k)/2 epochs lowering the difficulty, then spend (n+k)/2 epochs raising the difficulty;

-

The other direction is very similar.

The total time.

The Flyclient paper didn’t say. Our solution is simple: compute the average epoch duration of each segment. If a certain segment’s average epoch duration deviates from the expectation too much (shorter than 3hr40min or longer than 4hr20min), then sample again from that segment, and verify everything in this suspicious segment.